
In the GCP Console, click on "Navigation menu" > "Compute Engine" > "VM instances". If prompted, enable the API for your project. Go to the Compute Engine dashboard: Compute Engine Dashboard You will get $300 in credit to explore the platform.Ĭlick on the project dropdown, then click on "New Project".Įnter a project name, choose a billing account, and click "Create". Create a Google Cloud Platform (GCP) account: If you don't have a GCP account, sign up for a free trial at Google Cloud Platform. You can easily adapt this process for any of the cloud services supported by Rclone (there are loads, check Rclone's Providers)ġ.

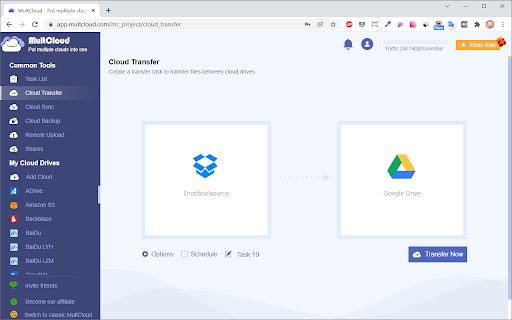

This process is free if you use the initial free credits you get on Google Cloud Platform. Step-by-step guide to migrate data from Dropbox to Google Drive using a Google Cloud VM
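The Console steps in this guide (create a project, enable the Compute Engine API, open the VM instances list) can also be done from a terminal. The following is only a rough sketch, assuming the `gcloud` CLI is installed and already logged in; the guide itself uses the Console. `PROJECT_ID` and its default value are hypothetical placeholders, and the `DRY_RUN` switch is an addition for illustration so the commands can be inspected before anything is created.

```shell
# Sketch: CLI counterpart of the Console steps (assumes gcloud is installed and authenticated).
# PROJECT_ID is a hypothetical placeholder; real project IDs must be globally unique.
PROJECT_ID="${PROJECT_ID:-my-rclone-migration}"
DRY_RUN="${DRY_RUN:-1}"   # 1 = only print the commands, 0 = actually run them

# Print the command in dry-run mode, otherwise execute it.
run() {
  if [ "$DRY_RUN" = "1" ]; then
    echo "+ $*"
  else
    "$@"
  fi
}

setup_project() {
  # Create the project and make it the default for later commands.
  run gcloud projects create "$PROJECT_ID"
  run gcloud config set project "$PROJECT_ID"
  # A billing account still has to be linked to the project (done in the Console
  # in this guide) before the Compute Engine API can be enabled.
  run gcloud services enable compute.googleapis.com
  # Equivalent of opening "Compute Engine" > "VM instances".
  run gcloud compute instances list
}

setup_project
```

With the default `DRY_RUN=1` the script only prints the four commands; setting `DRY_RUN=0` runs them for real against your account.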

Hi everyone, I got the task to migrate 1.5 TB from Dropbox to a Google Shared Drive, the one with unlimited size. The problem is that I only have a 50 Mbps internet connection, with tons of folders filled with a whole lot of subfolders and small files, and it's taking forever just to move one folder with 17 GB and more than 70,000 files (the bandwidth is not being fully used; it gets stuck at 100-400 kbps).

I've read about better flags to add to my current command line, but it's still all new to me and I can't figure out a better way to approach the migration. I've heard about "dropbox-batch-size" and "drive-chunk-size".

This is what I'm running right now, along with a batch file (a little modification of the Rclone Browser default flags):
C:\Users\Principal\Desktop\rclone\rclone.exe copy "Dropbox:%folder%" "Drive:%folder%" --verbose --transfers 16 --checkers 16 --contimeout 60s --timeout 300s --retries 3 --low-level-retries 10 --stats 1s --stats-file-name-length 0 --stats-one-line --ignore-existing --progress --fast-list
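For running the same copy on a Linux machine such as the Cloud VM, the command from the post can be wrapped in a small shell function. This is a minimal sketch, not the poster's actual batch file: the remote names `Dropbox:` and `Drive:` and all flags are taken from the post (with rclone's double-dash flag syntax), while the function name `copy_folder`, the `DRY_RUN` switch, and the folder names in the loop are hypothetical.

```shell
# Sketch: the post's rclone command as a shell function (e.g. for use on the VM).
# Remotes "Dropbox:" and "Drive:" come from the post; folder names below are made up.
DRY_RUN="${DRY_RUN:-1}"   # 1 = print the command only, 0 = run rclone

copy_folder() {
  src_folder="$1"
  # Build the argument list once so the printed and executed commands match.
  set -- rclone copy "Dropbox:$src_folder" "Drive:$src_folder" \
    --verbose --transfers 16 --checkers 16 \
    --contimeout 60s --timeout 300s \
    --retries 3 --low-level-retries 10 \
    --stats 1s --stats-file-name-length 0 --stats-one-line \
    --ignore-existing --progress --fast-list
  if [ "$DRY_RUN" = "1" ]; then
    # For inspection only: quoting of names with spaces is not shown here.
    echo "$*"
  else
    "$@"
  fi
}

# Hypothetical top-level folders; replace with the real ones.
for folder in "Projects" "Photos 2020"; do
  copy_folder "$folder"
done
```

Run on the VM, the data travels from Dropbox to the VM to Google Drive, so it no longer passes through the 50 Mbps home connection. Whether the flag values above are a good fit for many small files is left open here, as in the post.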
